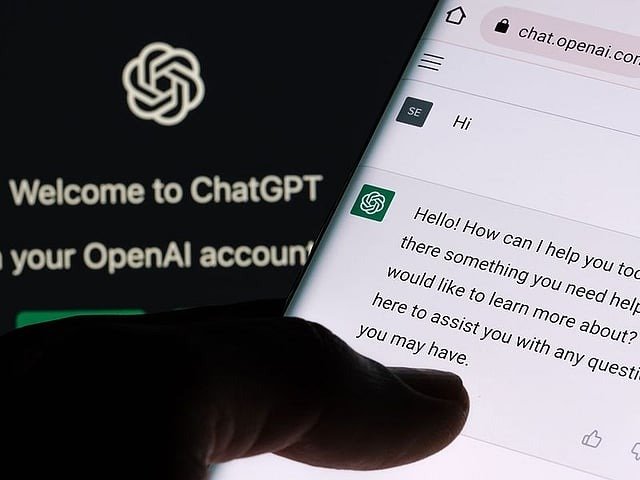

ChatGPT Can Hallucinate: Dubai Medical College Dean Urges Students to Verify AI Data

Dubai: Artificial intelligence (AI) is revolutionising education — but with power comes responsibility. A Dubai-based medical college dean has urged students to verify data generated by AI tools such as ChatGPT, warning that blind trust in technology could lead to academic and clinical errors.

Speaking at the ninth Gulf News Edufair Dubai 2025, Dr. Wafaa Al Johani, Dean of Batterjee Medical College, Dubai, highlighted both the promise and pitfalls of AI in higher education.

“AI, telecommunication, and all kinds of smart technologies are now mandatory and integral parts of medical education,” Dr. Al Johani said during a panel titled ‘From White Coats to Smart Care: Adapting to a New Era in Medicine’.

“But we have to be careful. It has positive and negative sides,” she cautioned, stressing the importance of academic integrity and ethical AI use.

AI hallucination — a growing concern

Dr. Al Johani explained that AI tools are now deeply embedded in healthcare education — from virtual patient simulations and digital anatomy labs to AI-assisted diagnostic systems.

However, she warned that generative AI systems like ChatGPT can produce incorrect or fabricated information, a phenomenon known as “AI hallucination.”

“If you ask ChatGPT for a treatment plan or a medication regimen, it might give you a drug that’s no longer in use. Sometimes it even provides references that don’t exist,” she said.

Her message to students was clear: “You have to find the source, read the article, and verify the data before using it in your research or reports.”

Integrity and ethics in the AI era

According to Dr. Al Johani, the rise of AI means digital literacy, ethical awareness, and data integrity are no longer optional — they are core competencies for the next generation of healthcare professionals.

“We are seeing students submit assignments copied directly from ChatGPT. This is not learning,” she said.

“Students need to understand data privacy, confidentiality, and the ethics of using technology responsibly.”

Her comments echoed growing global concerns about the misuse of generative AI in academia, where institutions are struggling to balance innovation with authenticity.

Blending smart tools with human learning

At Batterjee Medical College, AI is being incorporated responsibly into the curriculum. Students train with high-fidelity mannequins and hybrid simulation models that combine digital and real patient interactions — bridging the gap between theory and clinical practice.

“These simulation-based training systems help students make better clinical decisions without risking patient safety,” Dr. Al Johani explained.

She added that the goal is not to replace human expertise but to enhance learning through technology.

“AI will never replace humans”

Dr. Al Johani also reminded students that AI should be viewed as a partner, not a threat.

“AI will never replace humans. It will replace those who are unable to use it,” she said.

“So, keep the doors open, stay curious, and keep learning.”

Her message resonated strongly with the audience, as students and educators alike grapple with how to integrate AI responsibly into classrooms and clinics.

Why AI literacy matters

Experts at Edufair 2025 agreed that AI literacy is becoming a key employability skill across all fields — especially in medicine, where precision and accountability are critical.

By encouraging critical thinking, fact-checking, and ethical awareness, educators like Dr. Al Johani aim to prepare students not just for tomorrow’s hospitals, but for a world where data drives every decision.

“Technology will keep evolving,” she concluded. “But it’s our human judgment and integrity that will always define real intelligence.”
